A Southern California woman claims she was tricked by sophisticated AI-generated videos impersonating General Hospital actor Steve Burton. Over nearly a year, she says she lost more than $80,000 to the scam while believing she was in a genuine relationship.

Fake Video of General Hospital’s Steve Burton Scams Woman Out of $80,000

Key Takeaways:

- The scam relied on AI technology to create realistic videos of actor Steve Burton.

- A Southern California woman was defrauded of more than $80,000.

- The communication lasted nearly a year.

- The victim genuinely believed she was interacting with Burton.

- The incident highlights the alarming potential of deepfake scams.

Introduction

Deepfake technology has emerged as one of the most concerning trends in digital fraud. In this case, an individual impersonating General Hospital star Steve Burton allegedly convinced a Southern California woman to send more than $80,000 over the course of nearly a year.

Background

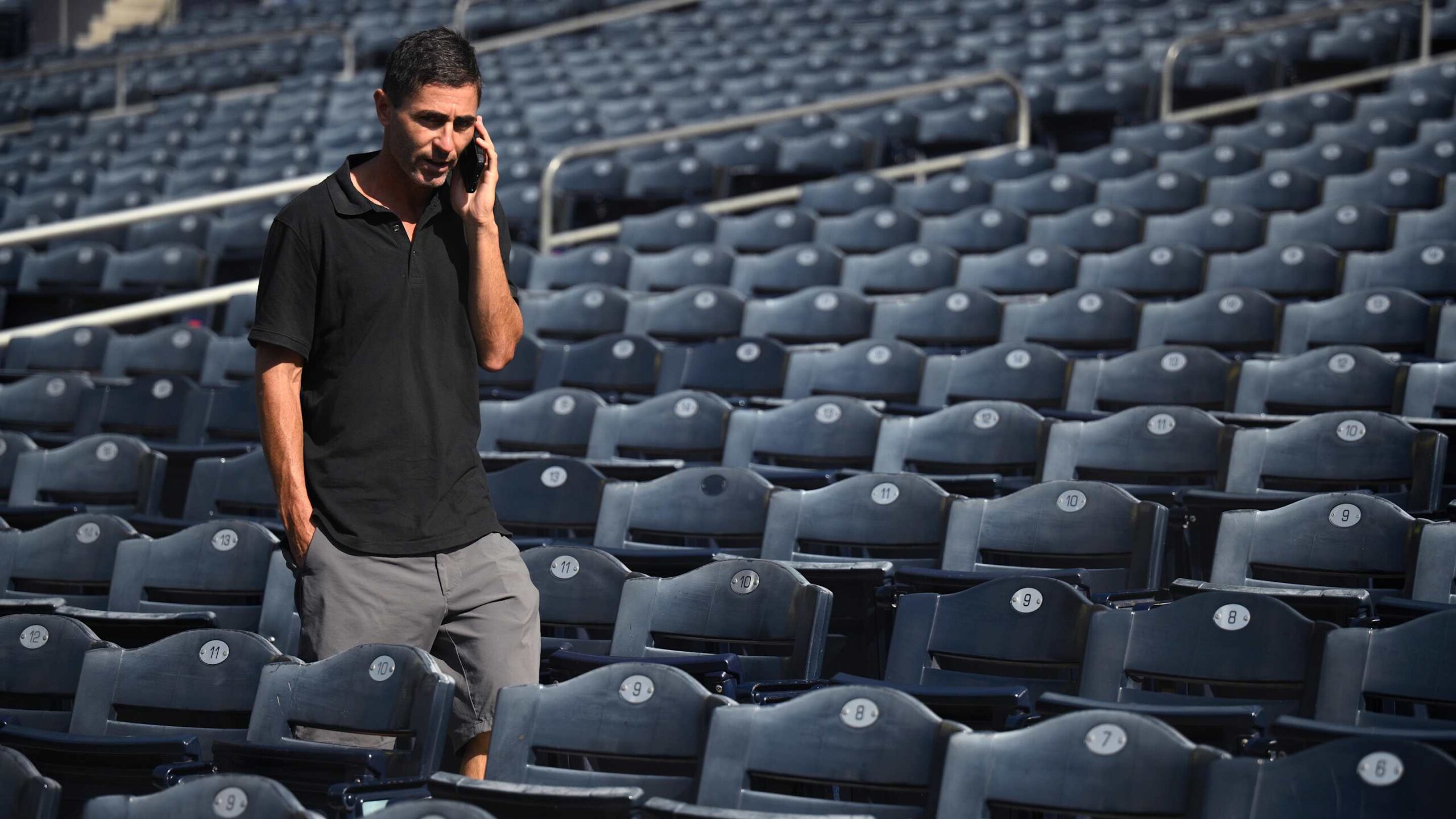

Steve Burton, 55, is best known for his long-standing role on the iconic soap opera General Hospital. While the actor himself had no involvement in this situation, his name and likeness were used by a scammer adept at wielding artificial intelligence to produce highly realistic videos.

The Victim’s Story

The victim, identified as Southern California resident Abigail Ruvalcaba, first encountered the impostor online nearly a year ago. She recalls exchanging messages and video clips with someone appearing to be Steve Burton. The technology was so convincing, she said, that she never suspected she was communicating with a fraudster.

Emotional Toll

In an interview with local station KTLA, Ruvalcaba stated, “I thought I was in love,” reflecting the emotional depth of the deception. The impostor fostered a sense of intimacy, building trust over time and eventually persuading her to send more than $80,000 in total.

How Deepfake Technology Enables Scams

Deepfake videos use sophisticated algorithms to superimpose and synchronize facial movements, making them nearly impossible to identify as fraudulent at a quick glance. This allows scammers to convincingly impersonate celebrities, business leaders, and even personal acquaintances.

| Potential Dangers of Deepfakes |
|---|
| Highly convincing visuals |
| Hard to detect fraud |
| Emotional manipulation |
| Targets fans and followers |
| Large financial losses |

Conclusion

This incident illustrates the vulnerabilities that arise when powerful technology meets human trust. As deepfakes become increasingly prevalent, authorities warn that online interactions—especially those involving money transfers—should always be approached with caution. While Steve Burton himself had no role in this scam, the misuse of his image underscores the need for heightened vigilance in the digital age.